What is a Markov Chain?

A Markov Chain is a mathematical model of a system that moves between states according to transition probabilities. Its defining property is that the probability of the next state depends only on the current state, not on the sequence of states that came before it.

Markov Property

The Markov Property is expressed mathematically as:

$$ P(X_{t+1} = s_j \mid X_t = s_i, X_{t-1}, \ldots, X_0) = P(X_{t+1} = s_j \mid X_t = s_i) $$

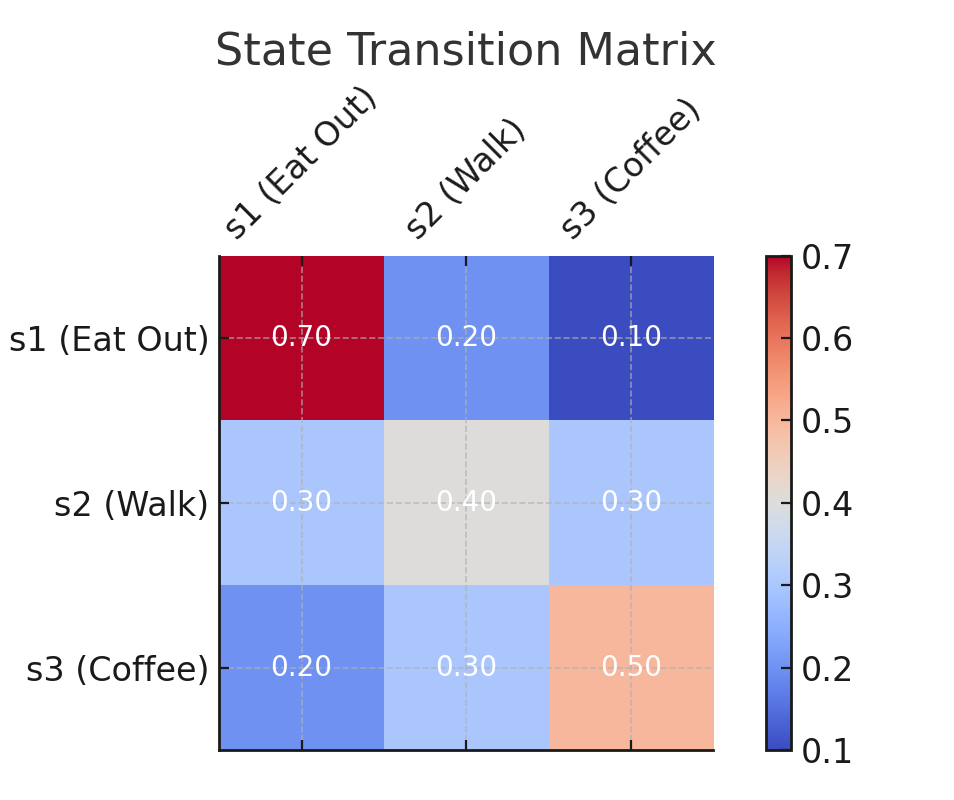

State Transition Probability Matrix

The state transitions in a Markov Chain are described by a state transition probability matrix, $P$:

$$ P = \begin{bmatrix} 0.7 & 0.2 & 0.1 \\ 0.3 & 0.4 & 0.3 \\ 0.2 & 0.3 & 0.5 \end{bmatrix} $$

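Each entry $P(s_j \mid s_i)$ represents the probability of transitioning from state $s_i$ to state $s_j$, with $s_i$ indexing the row and $s_j$ the column. Each row of the matrix must sum to 1, because the system has to move to some state (possibly the same one) at every step.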
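As a quick sanity check, the row-sum condition can be verified in a few lines of plain Python. This is only a sketch: the helper name `is_row_stochastic` and its tolerance are hypothetical choices made for illustration, not part of any library.

```python
# Transition matrix from the text: row i holds the probabilities of moving
# from state s_i to each state s_j.
P = [
    [0.7, 0.2, 0.1],
    [0.3, 0.4, 0.3],
    [0.2, 0.3, 0.5],
]


def is_row_stochastic(matrix, tol=1e-9):
    """Return True if every entry is non-negative and every row sums to 1.

    Hypothetical helper for illustration, not part of any library.
    """
    for row in matrix:
        if any(p < 0 for p in row):
            return False
        # Compare with a tolerance because of floating-point rounding.
        if abs(sum(row) - 1.0) > tol:
            return False
    return True


print(is_row_stochastic(P))
```

Running a check like this before any prediction catches typos in the matrix, which would otherwise produce distributions that no longer sum to 1.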

Visual Representation

Initial State Distribution

The initial state distribution is represented as a vector $\pi$. For example:

$$ \pi^{(0)} = [1.0, 0.0, 0.0] $$

This means the system starts entirely in state $s_1$ (eating out, in the practical example below).

Prediction Formula

To predict the state distribution at the next time step, use the formula:

$$ \pi^{(t+1)} = \pi^{(t)} \cdot P $$

Repeat this process iteratively to predict the distribution over multiple steps.
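As a worked example, taking the transition matrix and initial distribution given above, the first two steps can be computed by hand. Each entry of the new vector is the dot product of the current distribution with one column of the matrix:

$$ \pi^{(1)} = \pi^{(0)} \cdot P = [1.0, 0.0, 0.0] \cdot P = [0.7, 0.2, 0.1] $$

$$ \pi^{(2)} = \pi^{(1)} \cdot P = [0.7 \cdot 0.7 + 0.2 \cdot 0.3 + 0.1 \cdot 0.2,\; 0.7 \cdot 0.2 + 0.2 \cdot 0.4 + 0.1 \cdot 0.3,\; 0.7 \cdot 0.1 + 0.2 \cdot 0.3 + 0.1 \cdot 0.5] = [0.57, 0.25, 0.18] $$

Unrolling the recursion gives the equivalent closed form $\pi^{(t)} = \pi^{(0)} \cdot P^t$.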

Practical Example: Deciding Daily Activities

Imagine you’re deciding what to do tomorrow:

- Eat out ($s_1$).

- Go for a walk ($s_2$).

- Grab coffee ($s_3$).

Based on your habits:

- If you eat out today, there’s a 70% chance you’ll eat out again tomorrow, 20% chance for a walk, and 10% chance for coffee.

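- If you go for a walk today, there’s a 30% chance you’ll eat out tomorrow, 40% chance for another walk, and 30% chance for coffee.

- If you grab coffee today, there’s a 20% chance you’ll eat out tomorrow, 30% chance for a walk, and 50% chance for coffee again.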

The state transition matrix $P$ and initial state distribution $\pi^{(0)}$ are as shown earlier. Let’s predict your activity distribution over the next 10 days.

Python Implementation
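A minimal sketch of the 10-day prediction in plain Python, assuming the transition matrix and initial distribution given earlier. The names `STATES`, `step`, and `predict` are illustrative choices rather than anything from a library. With NumPy arrays, the body of `step` would reduce to `pi @ P`.

```python
STATES = ["Eat out", "Walk", "Coffee"]

# Row i gives the probabilities of moving from state i to each state j.
P = [
    [0.7, 0.2, 0.1],
    [0.3, 0.4, 0.3],
    [0.2, 0.3, 0.5],
]

# Start entirely in the first state (eating out).
pi0 = [1.0, 0.0, 0.0]


def step(pi, P):
    """One application of pi_next = pi . P (row vector times matrix)."""
    n = len(P)
    return [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]


def predict(pi, P, days):
    """Return the list of distributions for day 0 through day `days`."""
    history = [list(pi)]
    for _ in range(days):
        pi = step(pi, P)
        history.append(pi)
    return history


history = predict(pi0, P, days=10)
for day, dist in enumerate(history):
    formatted = ", ".join(f"{name}: {p:.3f}" for name, p in zip(STATES, dist))
    print(f"Day {day:2d}: {formatted}")
```

Keeping the whole history, rather than only the final vector, makes it easy to see how quickly the distribution settles.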


Example Output

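Iterating the prediction formula from $\pi^{(0)}$ for 10 days gives a distribution of approximately $[0.457, 0.283, 0.261]$.

By the 10th day, the probabilities have nearly stabilized: further steps change them only in the fourth decimal place or beyond. The exact limit is $[21/46, 13/46, 12/46]$, so the three activities are not equally likely. Eating out remains the most probable at about 46%, followed by walking at about 28% and grabbing coffee at about 26%.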
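The vector that the iteration settles toward is called the stationary distribution: it satisfies $\pi = \pi \cdot P$, so one more step leaves it unchanged. One way to approximate it, sketched below in plain Python, is to keep applying the update until the distribution stops changing. The function name `stationary_distribution`, the tolerance, and the iteration cap are assumptions made for illustration.

```python
def step(pi, P):
    """One application of pi_next = pi . P (row vector times matrix)."""
    n = len(P)
    return [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]


def stationary_distribution(P, pi, tol=1e-12, max_iter=10_000):
    """Iterate pi <- pi . P until successive distributions differ by < tol.

    Illustrative helper: assumes the chain converges from the given start,
    which holds for the matrix below because all of its entries are positive.
    """
    for _ in range(max_iter):
        nxt = step(pi, P)
        if max(abs(a - b) for a, b in zip(nxt, pi)) < tol:
            return nxt
        pi = nxt
    raise RuntimeError("did not converge within max_iter iterations")


P = [
    [0.7, 0.2, 0.1],
    [0.3, 0.4, 0.3],
    [0.2, 0.3, 0.5],
]

pi_star = stationary_distribution(P, [1.0, 0.0, 0.0])
print([round(p, 4) for p in pi_star])

# A stationary distribution is unchanged by one more step.
residual = max(abs(a - b) for a, b in zip(step(pi_star, P), pi_star))
print(residual < 1e-9)
```

For this matrix the result does not depend on the starting vector, so any valid initial distribution can be passed as the second argument.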

Conclusion

Markov Chains are versatile and easy to implement. Whether predicting daily decisions, modeling weather patterns, or generating text, they provide a powerful framework for understanding probabilistic systems.